Add These 10 Magnets To Your DeepSeek

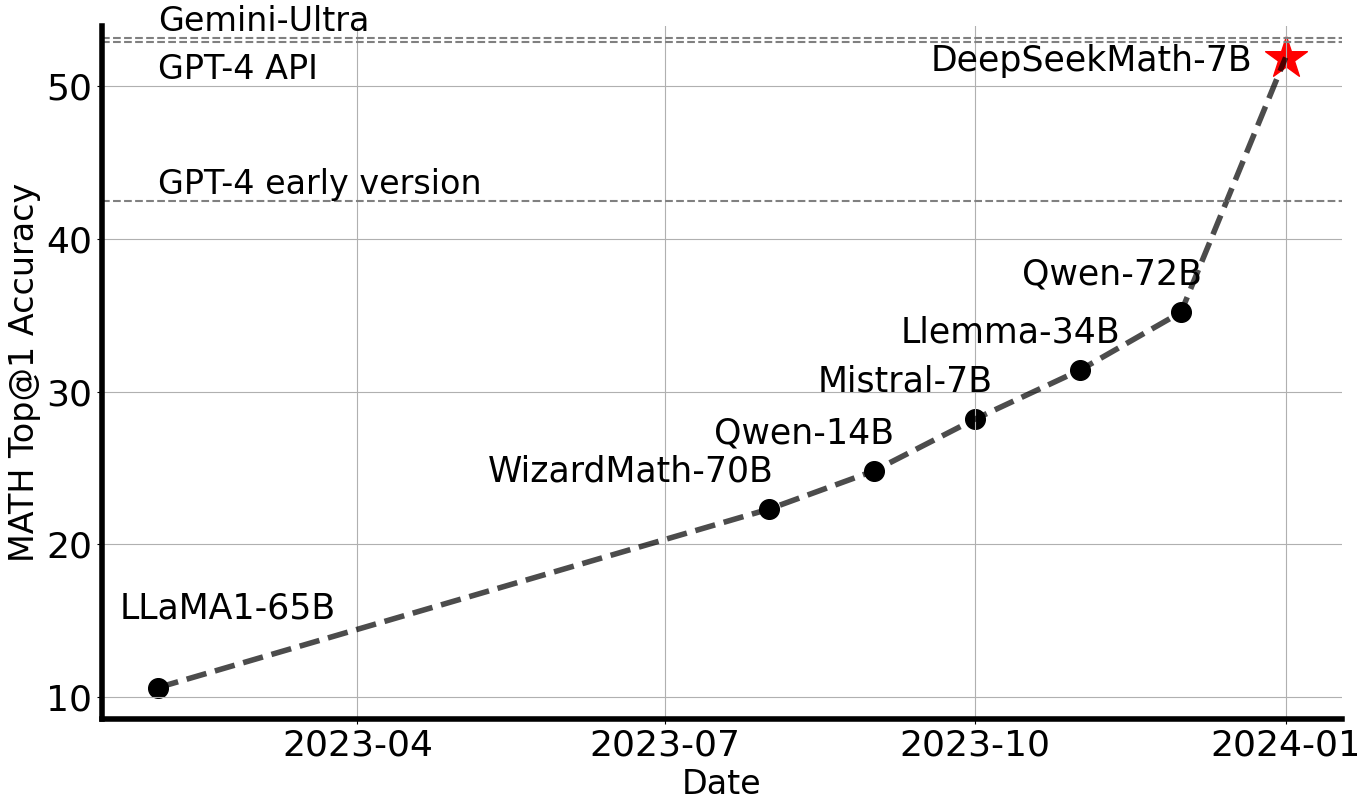

• We introduce an innovative methodology to distill reasoning capabilities from the long-Chain-of-Thought (CoT) model, specifically from one of the DeepSeek R1 series models, into standard LLMs, notably DeepSeek-V3. Despite its excellent performance, DeepSeek-V3 requires only 2.788M H800 GPU hours for its full training.

For example, a 175 billion parameter model that requires 512 GB - 1 TB of RAM in FP32 could potentially be reduced to 256 GB - 512 GB of RAM by using FP16, since each parameter shrinks from 4 bytes to 2. You can use GGUF models from Python using the llama-cpp-python or ctransformers libraries. They are also compatible with many third-party UIs and libraries; please see the list at the top of this README.

Chinese AI startup DeepSeek has launched DeepSeek-V3, a massive 671-billion parameter model, shattering benchmarks and rivaling top proprietary systems. Likewise, the company recruits people without any computer science background to help its technology understand other topics and knowledge areas, including being able to generate poetry and perform well on the notoriously difficult Chinese college admissions exams (Gaokao). Such AIS-linked accounts were subsequently found to have used the access they gained through their rankings to derive knowledge necessary to the production of chemical and biological weapons.

Once you have obtained an API key, you can access the DeepSeek API using the following example scripts.
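The text above gives no scripts, so here is a minimal sketch of what such a script might look like, using only the Python standard library. The endpoint URL, the model name `deepseek-chat`, the OpenAI-style response shape, and the `DEEPSEEK_API_KEY` environment variable are all assumptions, not details from this page; check them against the official API documentation before relying on them.

```python
import json
import os
import urllib.request

# Assumed values (not given in the text above): endpoint URL and model name.
API_URL = "https://api.deepseek.com/chat/completions"
MODEL = "deepseek-chat"


def build_chat_request(api_key: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a single-turn chat-completion request."""
    payload = {
        "model": MODEL,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def ask(api_key: str, prompt: str) -> str:
    """Send the request and return the first reply's text.

    Assumes an OpenAI-style response: choices[0].message.content.
    """
    with urllib.request.urlopen(build_chat_request(api_key, prompt)) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]


if __name__ == "__main__":
    # DEEPSEEK_API_KEY is a hypothetical variable name chosen for this sketch.
    key = os.environ.get("DEEPSEEK_API_KEY")
    if key:
        print(ask(key, "Say hello in one sentence."))
    else:
        print("Set DEEPSEEK_API_KEY to send a real request.")
```

Keeping request construction separate from sending makes it possible to inspect the URL, headers, and JSON body without touching the network.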

Make sure you are using llama.cpp from commit d0cee0d or later. Companies that most successfully transition to AI will blow the competition away; some of these companies will have a moat and continue to make high profits. R1 is significant because it broadly matches OpenAI's o1 model on a range of reasoning tasks and challenges the notion that Western AI companies hold a significant lead over Chinese ones. Compared with DeepSeek-V2, we optimize the pre-training corpus by enhancing the ratio of mathematical and programming samples, while expanding multilingual coverage beyond English and Chinese. But Chinese AI development firm DeepSeek has disrupted that notion.

Second, when DeepSeek developed MLA, they needed to add other things (for example, a weird concatenation of positional encodings and no positional encodings) beyond just projecting the keys and values, because of RoPE.

Super-blocks with 16 blocks, each block having 16 weights. K - "type-0" 3-bit quantization in super-blocks containing 16 blocks, each block having 16 weights. K - "type-1" 2-bit quantization in super-blocks containing 16 blocks, each block having 16 weights. K - "type-1" 5-bit quantization.

It doesn't tell you everything, and it may not keep your data safe.
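To make the "type-0" and "type-1" block quantization listed above more concrete, here is a simplified sketch. It assumes the common reading in which type-0 stores only a per-block scale (w ≈ d·q) and type-1 stores a scale and a minimum (w ≈ d·q + m); the text does not spell this out. It is not the actual llama.cpp bit layout, which also packs the integers and quantizes the per-block scales at the super-block level. All function names here are hypothetical.

```python
from typing import List, Tuple

BLOCK_SIZE = 16             # weights per block, as stated in the text
BLOCKS_PER_SUPER_BLOCK = 16  # blocks per super-block, as stated in the text


def split_super_block(weights: List[float]) -> List[List[float]]:
    """Split one super-block (16 x 16 = 256 weights) into its 16 blocks."""
    expected = BLOCK_SIZE * BLOCKS_PER_SUPER_BLOCK
    if len(weights) != expected:
        raise ValueError(f"expected {expected} weights, got {len(weights)}")
    return [weights[i:i + BLOCK_SIZE] for i in range(0, expected, BLOCK_SIZE)]


def quantize_type0(block: List[float], bits: int) -> Tuple[float, List[int]]:
    """Assumed 'type-0' scheme: w ~ d * q, with signed integers q."""
    qmax = 2 ** (bits - 1) - 1                 # e.g. 3 for 3-bit
    amax = max(abs(w) for w in block)
    d = amax / qmax if amax > 0 else 1.0       # per-block scale
    q = [max(-qmax - 1, min(qmax, round(w / d))) for w in block]
    return d, q


def dequantize_type0(d: float, q: List[int]) -> List[float]:
    return [d * v for v in q]


def quantize_type1(block: List[float], bits: int) -> Tuple[float, float, List[int]]:
    """Assumed 'type-1' scheme: w ~ d * q + m, with unsigned integers q."""
    levels = 2 ** bits - 1                     # e.g. 31 for 5-bit
    m = min(block)                             # per-block minimum
    span = max(block) - m
    d = span / levels if span > 0 else 1.0     # per-block scale
    q = [min(levels, max(0, round((w - m) / d))) for w in block]
    return d, m, q


def dequantize_type1(d: float, m: float, q: List[int]) -> List[float]:
    return [d * v + m for v in q]
```

In both schemes the reconstruction error per weight is at most half a quantization step (d / 2), which is why more bits, and therefore a smaller d, give a closer approximation.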

Of course they aren't going to tell the whole story, but perhaps solving REBUS puzzles (with careful vetting of the dataset and an avoidance of too much few-shot prompting) will actually correlate with meaningful generalization in models? Listen to this story: a company based in China, which aims to "unravel the mystery of AGI with curiosity", has released DeepSeek LLM, a 67 billion parameter model trained meticulously from scratch on a dataset consisting of 2 trillion tokens. The company also released some "DeepSeek-R1-Distill" models, which are not initialized on V3-Base, but instead are initialized from other pretrained open-weight models, including LLaMA and Qwen, then fine-tuned on synthetic data generated by R1. Models are released as sharded safetensors files. This repo contains GGUF format model files for DeepSeek's Deepseek Coder 1.3B Instruct. These files were quantised using hardware kindly provided by Massed Compute. First, we tried some models using Jan AI, which has a nice UI. From a more detailed perspective, we compare DeepSeek-V3-Base with the other open-source base models individually.

A more speculative prediction is that we will see a RoPE replacement or at least a variant. Will macroeconomics limit the development of AI? Rust ML framework with a focus on performance, including GPU support, and ease of use. Building upon widely adopted techniques in low-precision training (Kalamkar et al., 2019; Narang et al., 2017), we propose a mixed precision framework for FP8 training. Through the support for FP8 computation and storage, we achieve both accelerated training and reduced GPU memory usage. Lastly, we emphasize again the economical training costs of DeepSeek-V3, summarized in Table 1, achieved through our optimized co-design of algorithms, frameworks, and hardware.

Which LLM is best for generating Rust code? This part of the code handles potential errors from string parsing and factorial computation gracefully. 1. Error Handling: The factorial calculation may fail if the input string cannot be parsed into an integer. We ran multiple large language models (LLMs) locally in order to figure out which one is the best at Rust programming. Now that we have Ollama running, let's try out some models.
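The code that the error-handling remark refers to is not shown on this page. As an illustration only, here is a Python analogue of the behaviour it describes: parse a string, compute the factorial, and report failures instead of crashing. The function names are hypothetical. In Rust the computation could also fail through integer overflow; Python integers are arbitrary precision, so this sketch only models bad input and negative numbers.

```python
def factorial_from_string(text: str) -> int:
    """Parse text as an integer and return its factorial.

    Raises ValueError if the text is not an integer or is negative.
    """
    try:
        n = int(text.strip())
    except ValueError as exc:
        raise ValueError(f"not an integer: {text!r}") from exc
    if n < 0:
        raise ValueError("factorial is undefined for negative integers")
    result = 1
    for k in range(2, n + 1):
        result *= k
    return result


def describe(text: str) -> str:
    """Return a printable result, turning errors into messages."""
    try:
        return f"{text}! = {factorial_from_string(text)}"
    except ValueError as err:
        return f"error: {err}"


if __name__ == "__main__":
    for sample in ["5", "abc", "-1"]:
        print(describe(sample))
```

Separating the computation, which raises on failure, from the presentation layer, which catches and formats the error, mirrors the way a Rust version would typically return a `Result` and let the caller decide how to report it.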
