Addmeto (Addmeto) @ Tele.ga

How much did DeepSeek stockpile, smuggle, or innovate its way around U.S. export controls? The best way to keep up has been r/LocalLLaMa. DeepSeek, however, just demonstrated that another route is available: heavy optimization can produce remarkable results on weaker hardware and with lower memory bandwidth, so simply paying Nvidia more isn't the only way to build better models. US stocks dropped sharply Monday, and chipmaker Nvidia lost almost $600 billion in market value, after a surprise advance from a Chinese artificial intelligence company, DeepSeek, threatened the aura of invincibility surrounding America's technology industry. DeepSeek, though it has yet to reach that level, has a promising road ahead in AI writing assistance, especially for multilingual and technical content. As the field of code intelligence continues to evolve, papers like this one will play a crucial role in shaping the future of AI-powered tools for developers and researchers. …2 or later VITS, but by the time I noticed tortoise-tts also succeed with diffusion, I realized: "okay, this field is solved now too."

This paper presents a new benchmark called CodeUpdateArena to evaluate how effectively large language models (LLMs) can update their knowledge about evolving code APIs, a critical limitation of current approaches. The benchmark pairs synthetic API function updates with programming tasks that require the updated functionality, challenging the model to reason about the semantic changes rather than simply reproduce syntax. The goal is to update an LLM so that it can solve these programming tasks without being given the documentation for the API changes at inference time. The paper acknowledges some potential limitations of the benchmark, and existing knowledge editing methods also leave substantial room for improvement on it. Further research is needed to develop more effective methods for enabling LLMs to update their knowledge about code APIs. Last week, research firm Wiz discovered that an internal DeepSeek database was publicly accessible "within minutes" of conducting a security check.
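To make the benchmark setup concrete, here is a minimal Python sketch of what one instance (a synthetic API update paired with a task) and its check might look like. Everything in it is an assumption for illustration, not CodeUpdateArena's actual data format or harness: the `APIUpdate`/`UpdateTask` records, the hypothetical change to a `split` function, and the `evaluate` helper are invented here. The update's documentation is stored but deliberately never shown to the solver, mirroring the "no documentation at inference time" condition; scoring simply runs the generated code against the updated implementation.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class APIUpdate:
    """Hypothetical record for one synthetic API change (illustrative only)."""
    name: str        # name the updated function is exposed under
    docstring: str   # documentation of the change; withheld from the model at inference time
    impl: Callable   # the updated implementation


@dataclass
class UpdateTask:
    """Hypothetical programming task that only passes under the updated semantics."""
    prompt: str
    tests: List[Callable[[Dict[str, object]], bool]]


def updated_split(text: str, sep: str = " ", keep_empty: bool = False) -> List[str]:
    # Hypothetical update: empty fields are now dropped unless keep_empty=True.
    parts = text.split(sep)
    return parts if keep_empty else [p for p in parts if p]


update = APIUpdate(
    name="split",
    docstring="split(text, sep=' ', keep_empty=False): empty fields are dropped unless keep_empty=True.",
    impl=updated_split,
)

task = UpdateTask(
    prompt="Write count_fields(line) returning the number of comma-separated fields, including empty ones.",
    tests=[
        lambda ns: ns["count_fields"]("a,,b") == 3,
        lambda ns: ns["count_fields"]("a,b") == 2,
    ],
)


def evaluate(candidate_source: str, update: APIUpdate, task: UpdateTask) -> bool:
    """Run a generated solution with the *updated* API in scope and check every test."""
    namespace: Dict[str, object] = {update.name: update.impl}
    # Illustration only: a real harness should sandbox untrusted model output.
    exec(candidate_source, namespace)
    return all(test(namespace) for test in task.tests)


# A model with stale knowledge assumes split() still keeps empty fields by default.
stale_solution = "def count_fields(line):\n    return len(split(line, ','))\n"
# A model that has absorbed the update asks for empty fields explicitly.
updated_solution = "def count_fields(line):\n    return len(split(line, ',', keep_empty=True))\n"

print(evaluate(stale_solution, update, task))    # False
print(evaluate(updated_solution, update, task))  # True
```

Both candidates are syntactically valid calls to the same function; only the one that reflects the changed default behavior passes, which is the kind of semantic (rather than syntactic) reasoning the benchmark is meant to probe.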

After DeepSeek's app rocketed to the top of Apple's App Store this week, the Chinese AI lab became the talk of the tech industry. The question is what the new Chinese AI product means, and what it doesn't. COVID created a collective trauma that many Chinese people are still processing. As demand for advanced large language models (LLMs) grows, so do the challenges of deploying them. The CodeUpdateArena benchmark represents an important step forward in assessing LLM capabilities in the code generation domain, and the insights from this research can help drive the development of more robust and adaptable models that keep pace with the rapidly evolving software landscape. Overall, the benchmark is an important contribution to ongoing efforts to improve the code generation capabilities of large language models and make them more robust to the evolving nature of software development.

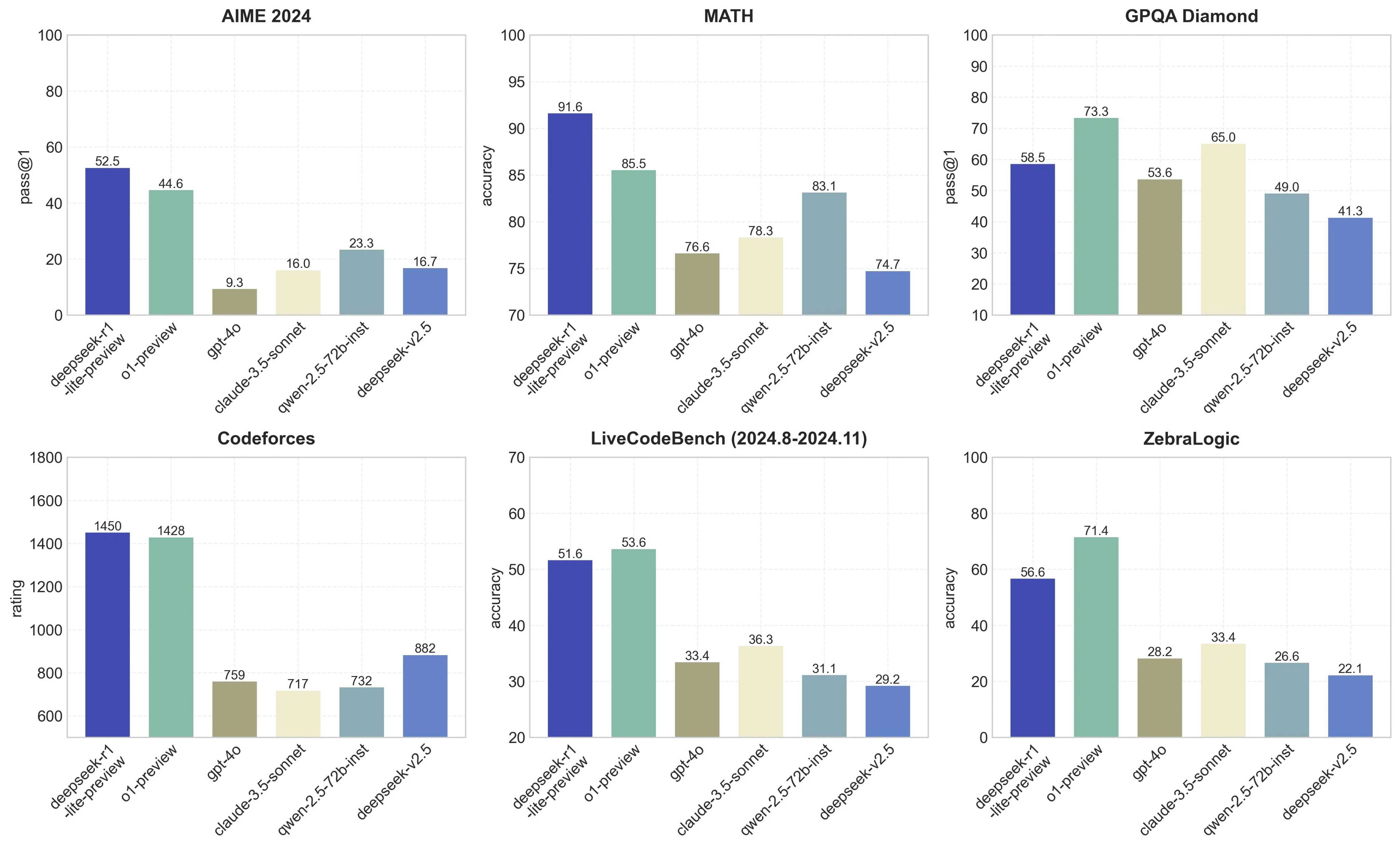

This paper examines how large language models (LLMs) can be used to generate and reason about code, but notes that the static nature of these models' knowledge does not reflect the fact that code libraries and APIs are constantly evolving. This is a Plain English Papers summary of a research paper called CodeUpdateArena: Benchmarking Knowledge Editing on API Updates. The paper presents a new benchmark, CodeUpdateArena, to test how well LLMs can update their knowledge to handle changes in code APIs. By enhancing code understanding, generation, and editing capabilities, the researchers have pushed the boundaries of what large language models can achieve in programming and mathematical reasoning. The CodeUpdateArena benchmark represents an important step forward in evaluating how well LLMs handle evolving code APIs, a critical limitation of current approaches. LiveCodeBench: Holistic and Contamination Free Evaluation of Large Language Models for Code.
