The Ten Commandments of DeepSeek

DeepSeek Chat comes in two variants, with 7B and 67B parameters, both trained on a dataset of two trillion tokens, according to the maker. There is no question that it represents a major improvement over the state of the art from just two years ago. By 2021, High-Flyer was exclusively using AI for its trading, amassing over 10,000 Nvidia A100 GPUs before US export restrictions on AI chips to China were imposed. The AP took Feroot’s findings to a second set of computer experts, who independently confirmed that China Mobile code is present. Overall, the present author was personally surprised at the quality of the DeepSeek responses. This technique samples the model’s responses to prompts, which are then reviewed and labeled by humans. For perspective, Nvidia lost more in market value on Monday than all but 13 companies are worth, period. The remarkable fact is that DeepSeek-R1, despite being far more economical, performs nearly as well as, if not better than, other state-of-the-art systems, including OpenAI’s "o1-1217" system. There are several ways to call the Fireworks API, including Fireworks' Python client, the REST API, or OpenAI's Python client. Other governments have already issued warnings about or placed restrictions on the use of DeepSeek, including South Korea and Italy.

If we force balanced routing, we lose the ability to implement such a routing setup and have to redundantly duplicate information across different experts. 4. MATH-500: This tests the ability to solve challenging high-school-level mathematical problems, typically requiring significant logical reasoning and multi-step solutions. Available now on Hugging Face, the model offers users seamless access via web and API, and it appears to be the most advanced large language model (LLM) currently available in the open-source landscape, according to observations and tests from third-party researchers. The analysis only applies to the web version of DeepSeek. The web login page of DeepSeek’s chatbot contains heavily obfuscated computer script that, when deciphered, shows connections to computer infrastructure owned by China Mobile, a state-owned telecommunications company. In its privacy policy, DeepSeek acknowledged storing data on servers inside the People’s Republic of China. This general approach works because underlying LLMs have become good enough that, if you adopt a "trust but verify" framing, you can let them generate a bunch of synthetic data and just implement a way to periodically validate what they do.
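
The "trust but verify" idea can be illustrated with a minimal sketch. Everything named here is an assumption made for illustration, not something described in the post: the `spot_check` helper, the every-third-sample audit schedule, and the toy arithmetic verifier `check_sum`. The point is only that generated data is accepted by default while a periodic audit measures how often it is wrong.

```python
from typing import Callable, Iterable, List, Tuple

Sample = Tuple[str, str]  # (prompt, generated answer)


def spot_check(
    samples: Iterable[Sample],
    verify: Callable[[str, str], bool],
    every: int = 3,
) -> Tuple[List[Sample], float]:
    """Accept generated samples, auditing every `every`-th one.

    Returns the kept samples and the failure rate among audited samples.
    """
    kept: List[Sample] = []
    checked = failed = 0
    for i, (prompt, answer) in enumerate(samples):
        if i % every == 0:  # periodic audit
            checked += 1
            if not verify(prompt, answer):
                failed += 1
                continue  # drop a sample that fails its audit
        kept.append((prompt, answer))  # unaudited samples are trusted
    rate = failed / checked if checked else 0.0
    return kept, rate


def check_sum(prompt: str, answer: str) -> bool:
    # Hypothetical verifier: prompts look like "2+3"; recompute the sum.
    a, b = prompt.split("+")
    return int(a) + int(b) == int(answer)


# Hypothetical synthetic data; two of the five answers are wrong.
synthetic = [("1+1", "2"), ("2+2", "4"), ("3+3", "7"), ("4+4", "9"), ("5+5", "10")]
kept, failure_rate = spot_check(synthetic, check_sum, every=3)
```

Note that the wrong answer for "3+3" slips through because it was never audited. In practice the observed failure rate would be used to decide whether to audit more often or discard the batch.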

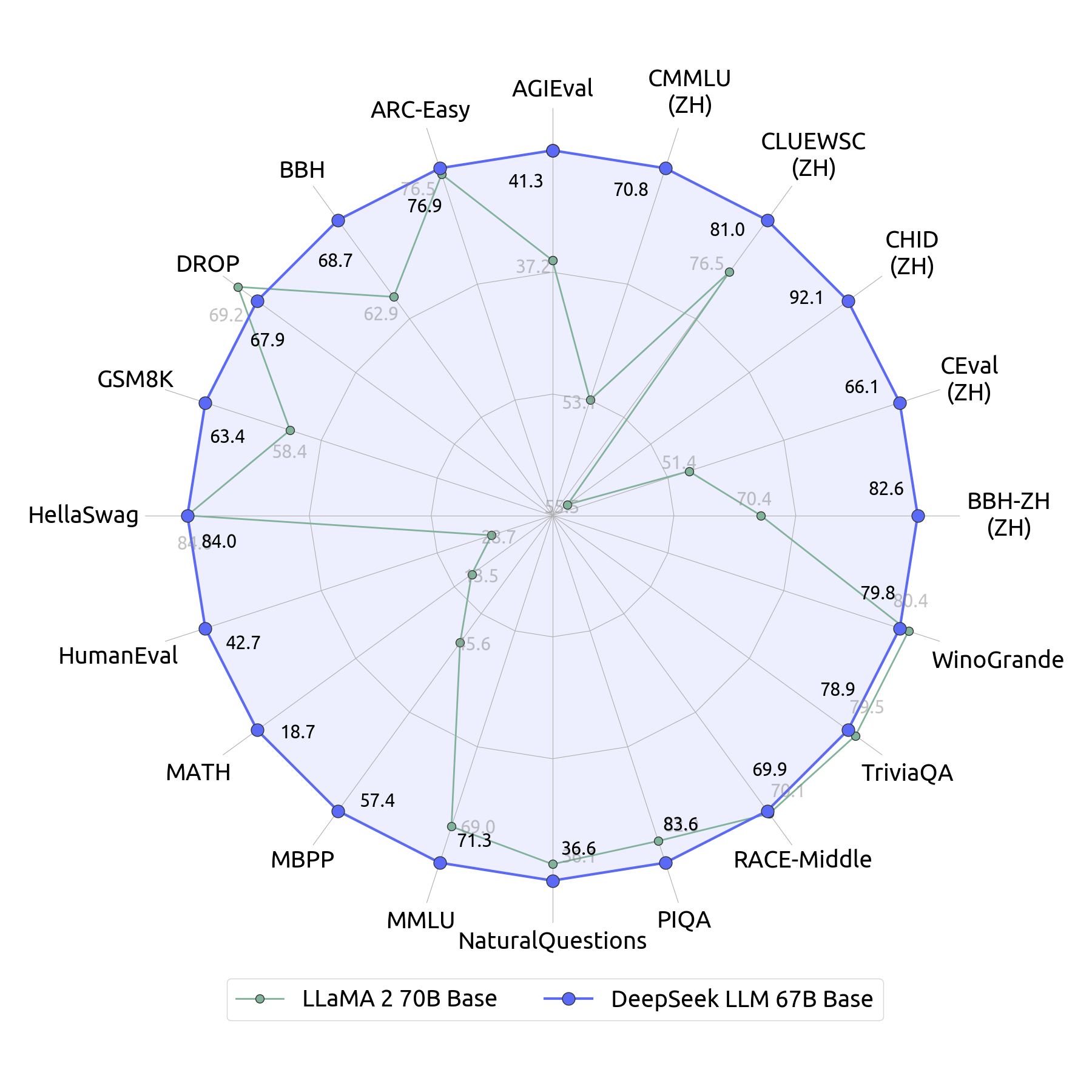

People who tested the 67B-parameter assistant said the tool had outperformed Meta’s Llama 2-70B, the current best we have in the LLM market. Comprising the DeepSeek LLM 7B/67B Base and DeepSeek LLM 7B/67B Chat, these open-source models mark a notable stride forward in language comprehension and versatile application. At an economical cost of only 2.664M H800 GPU hours, we complete the pre-training of DeepSeek-V3 on 14.8T tokens, producing the currently strongest open-source base model. According to their benchmarks, Sky-T1 performs roughly on par with o1, which is impressive given its low training cost. While inference costs drop, high-end training and advanced AI models would likely continue to justify heavy investment, ensuring that spending on cutting-edge AI capabilities remains strong. A distinctive aspect of DeepSeek-R1’s training process is its use of reinforcement learning, a technique that helps improve its reasoning capabilities. 2. CodeForces: A competition coding benchmark designed to accurately evaluate the reasoning capabilities of LLMs with human-comparable standardized Elo ratings.
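
For readers unfamiliar with Elo ratings, the sketch below shows the textbook Elo expected-score and update formulas. It is not a description of how Codeforces or the benchmark computes its ratings, which the post does not specify. The K-factor of 32 and the function names are assumptions chosen for illustration.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Textbook Elo: probability-like expected score of A against B."""
    return 1.0 / (1.0 + 10.0 ** ((rating_b - rating_a) / 400.0))


def update_rating(rating: float, expected: float, actual: float, k: float = 32.0) -> float:
    """Move the rating toward the observed result.

    `actual` is 1.0 for a win, 0.5 for a draw, 0.0 for a loss.
    `k` (assumed 32 here) controls how fast ratings move.
    """
    return rating + k * (actual - expected)


# Two equally rated players: each is expected to score 0.5.
even = expected_score(1500, 1500)

# A 400-point gap gives the stronger player roughly a 10-to-1 edge.
favorite = expected_score(1900, 1500)

# An evenly matched win moves the winner up by k / 2 points.
after_win = update_rating(1500, even, actual=1.0)
```

Because the scale is relative, a model's rating on such a benchmark can be read against the distribution of human contestants' ratings, which is what makes the scores "human-comparable".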

By focusing on the semantics of code updates rather than just their syntax, the benchmark poses a more challenging and realistic test of an LLM's ability to dynamically adapt its knowledge. 5. MMLU: Massive Multitask Language Understanding is a benchmark designed to measure knowledge acquired during pretraining, by evaluating LLMs exclusively in zero-shot and few-shot settings. A year after ChatGPT’s launch, the generative AI race is filled with many LLMs from various companies, all trying to excel by offering the best productivity tools. Regex is either your best friend or your worst enemy. While it’s praised for its technical capabilities, some noted the LLM has censorship issues. Competing hard on the AI front, China’s DeepSeek AI launched a new LLM called DeepSeek Chat this week, which is more powerful than any other current LLM. But its chatbot appears more directly tied to the Chinese state than previously known, through the link to China Mobile revealed by researchers. An X user shared that a question asked about China was automatically redacted by the assistant, with a message saying the content was "withdrawn" for security reasons.
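
The difference between zero-shot and few-shot evaluation comes down to how the prompt is built. The sketch below assembles a multiple-choice prompt with `k` worked exemplars placed before the target question, with `k = 0` giving the zero-shot case. The `Item` type, the prompt layout, and the sample questions are all hypothetical and are not taken from the MMLU dataset or its official evaluation harness.

```python
from typing import List, NamedTuple


class Item(NamedTuple):
    question: str
    choices: List[str]  # four options, displayed as A-D
    answer: str         # one of "A", "B", "C", "D"


def format_item(item: Item, with_answer: bool) -> str:
    """Render one question; exemplars include the answer, the target does not."""
    lines = [item.question]
    lines += [f"{label}. {text}" for label, text in zip("ABCD", item.choices)]
    lines.append(f"Answer: {item.answer}" if with_answer else "Answer:")
    return "\n".join(lines)


def build_prompt(exemplars: List[Item], target: Item, k: int) -> str:
    """Prefix the target with the first k solved exemplars (k=0 is zero-shot)."""
    shots = [format_item(e, with_answer=True) for e in exemplars[:k]]
    return "\n\n".join(shots + [format_item(target, with_answer=False)])


# Hypothetical items, made up for illustration.
exemplars = [
    Item("What is 2 + 2?", ["3", "4", "5", "6"], "B"),
    Item("Which planet is closest to the Sun?", ["Venus", "Earth", "Mercury", "Mars"], "C"),
]
target = Item("What is the chemical symbol for water?", ["H2O", "CO2", "O2", "NaCl"], "A")

zero_shot = build_prompt(exemplars, target, k=0)
two_shot = build_prompt(exemplars, target, k=2)
```

In both settings the model's weights are left unchanged. The exemplars only show the expected answer format, which is why such an evaluation reflects knowledge acquired during pretraining.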
