How to Get (A) Fabulous DeepSeek AI News on a Tight Budget

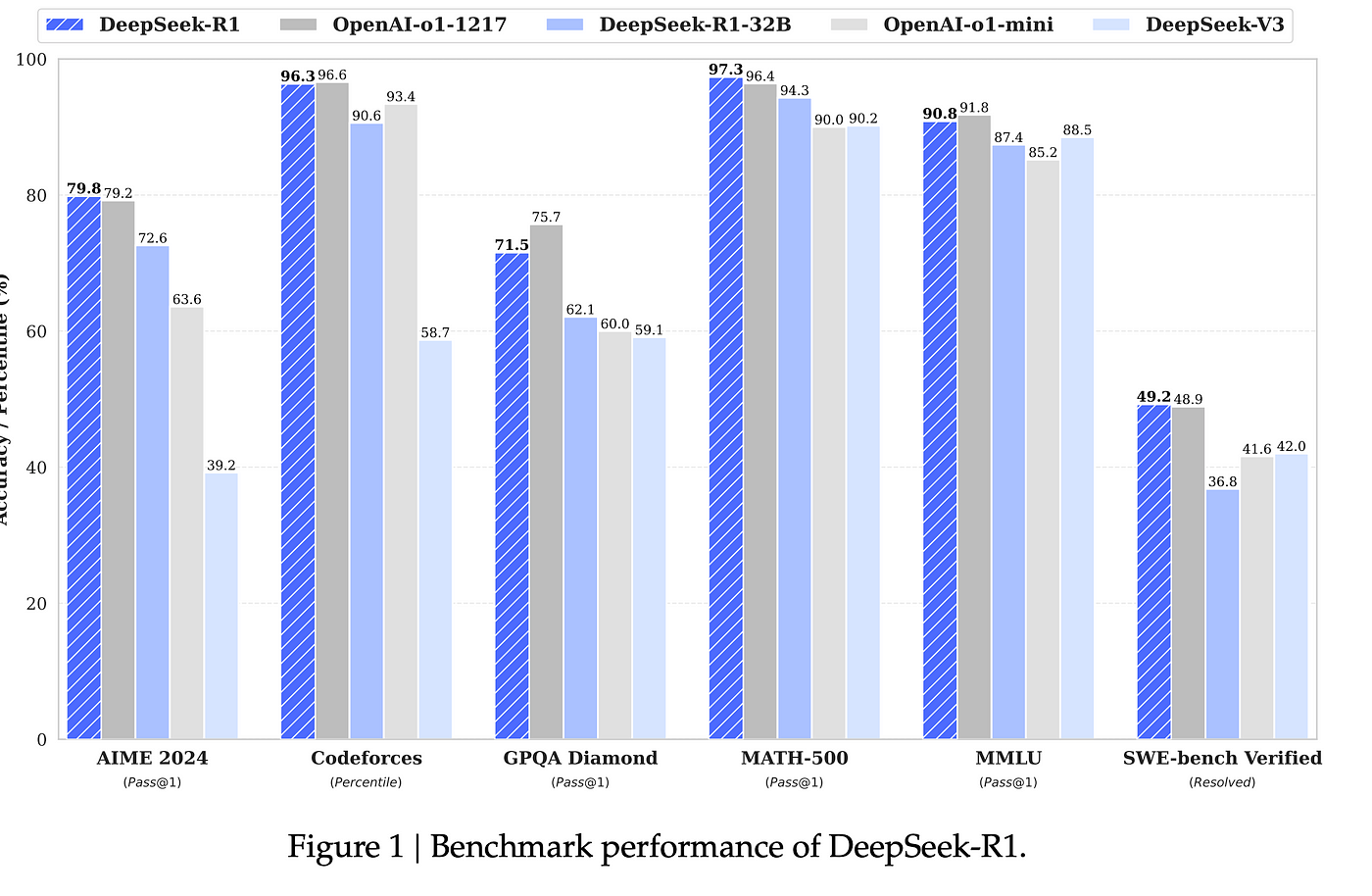

While many U.S. and Chinese AI companies chase market-driven applications, DeepSeek’s researchers focus on foundational bottlenecks: improving training efficiency, reducing computational costs and enhancing model generalization. DeepSeek achieved efficient training with significantly fewer resources than other AI models by using a "Mixture of Experts" architecture, in which specialized sub-models handle different tasks. This distributes the computational load and activates only the relevant parts of the model for each input, reducing the need for large amounts of computing power and data. Well, it's not a great day for AI investors, and NVIDIA in particular, since the Chinese firm DeepSeek has managed to disrupt industry norms with its latest R1 AI model, which is said to change the concept of model training and the resources involved behind it. DeepSeek’s breakthroughs have been in achieving greater efficiency: getting good results with fewer resources. Founded in 2023, DeepSeek has achieved its results with a fraction of the money and computing power of its competitors.
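The routing idea behind a Mixture of Experts layer can be sketched in a few lines. This is a toy illustration only, not DeepSeek's actual implementation: the names `make_expert`, `moe_forward`, `gate_weights` and `top_k` are hypothetical, and each "expert" is a trivial scaling function standing in for a real sub-network. It shows a router scoring every expert for an input, running only the top-scoring few, and blending their outputs.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def make_expert(scale):
    """Hypothetical expert: a toy function standing in for a sub-network."""
    return lambda x: [scale * v for v in x]


def moe_forward(x, gate_weights, experts, top_k=2):
    """Route input x to the top_k experts and blend their outputs.

    gate_weights holds one vector per expert (an assumption of this sketch);
    an expert's router score is the dot product of its vector with x.
    """
    scores = [sum(w * v for w, v in zip(row, x)) for row in gate_weights]
    # Pick the indices of the top_k highest-scoring experts.
    chosen = sorted(range(len(experts)), key=lambda i: scores[i], reverse=True)[:top_k]
    # Normalize the scores of the chosen experts only.
    weights = softmax([scores[i] for i in chosen])
    output = [0.0] * len(x)
    for weight, index in zip(weights, chosen):
        expert_out = experts[index](x)  # only the selected experts are evaluated
        output = [o + weight * y for o, y in zip(output, expert_out)]
    return output, chosen


# Four toy experts, but only two run for any given input.
experts = [make_expert(s) for s in (1.0, 2.0, 3.0, 4.0)]
gate_weights = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0], [0.0, -1.0]]
output, chosen = moe_forward([2.0, 1.0], gate_weights, experts, top_k=2)
print("experts used:", chosen)
print("blended output:", output)
```

With four experts and `top_k=2`, half of the expert computation is skipped for each input, which is the source of the savings described above. Production systems typically add many more experts and a load-balancing mechanism, which this sketch omits.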

US officials claimed the app is a supposed "national security" threat - their favourite excuse to justify imposing restrictions on Silicon Valley’s Chinese competitors. The startup's chatbot surged to become the most downloaded free app on Apple's U.S. App Store. DeepSeek says its model was developed with existing technology along with open source software that can be used and shared by anyone for free.

Typically, when a large language model (LLM) is trained not to answer certain queries, it will reply that it is incapable of fulfilling the request. In a blog post, AI model testing firm Promptfoo said, "Today we're publishing a dataset of prompts covering sensitive topics that are likely to be censored by the CCP." Data privacy emerges as another critical challenge: processing vast amounts of user-generated data raises the risk of breaches, misuse or unintended leakage, even with anonymization measures, and could compromise sensitive information.

However, the projected growth in power consumption for storage and memory is much lower than that required for GPU processing for AI models. But WIRED reports that for years, DeepSeek founder Liang Wenfeng's hedge fund High-Flyer has been stockpiling the chips that form the backbone of AI, known as GPUs, or graphics processing units.

While most LLMs treat ethics as a reactive checkbox, DeepSeek bakes it into every response. But while the current iteration of The AI Scientist demonstrates a strong ability to innovate on top of well-established ideas, such as Diffusion Modeling or Transformers, it is still an open question whether such systems can ultimately propose genuinely paradigm-shifting ideas. Open the Applications folder, find Ollama, and double-click to launch it. DeepSeek’s efficient AI training has caused much discussion in the AI community and triggered volatility in AI-related stocks. A year that started with OpenAI dominance is now ending with Anthropic’s Claude being the LLM I use and the introduction of several labs that are all trying to push the frontier, from xAI to Chinese labs like DeepSeek and Qwen. Why this matters - intelligence is the best defense: Research like this both highlights the fragility of LLM technology and illustrates how, as you scale up LLMs, they seem to become cognitively capable enough to have their own defenses against weird attacks like this.

However, we shouldn't be surprised at advances like those made in developing DeepSeek. However, these weren't the kind of refusals expected from a reasoning-focused AI model. Gadgets 360 staff members tested these prompts on DeepSeek and faced similar refusals. LLaMa-10, driving a large conversation in the civilian theatre about how the system had a high number of refusals in some areas due to ‘woke’ safety training and that this had also led to the generation of ‘nonsense science’ as a direct casualty of ‘DEI safetyism’. You can limit the conversation context to an Org heading with `gptel-org-set-topic'. This can be compared to the estimated 5.8GW of power consumed by San Francisco, CA. In other words, single data centers are projected to require as much power as a large city. Maybe it doesn't take so much capital, compute, and power after all. And again, as I mentioned, we're much more laissez faire. The DeepSeek models’ excellent performance, which rivals that of the best closed LLMs from OpenAI and Anthropic, spurred a stock-market rout on 27 January that wiped off more than US $600 billion from leading AI stocks.
